Hi everyone!

You might remember that with our 25.4 release, we officially brought full support for AMD’s powerful data center GPUs (MI300X and MI325X) to our MAX and Mojo platforms. These CDNA3-based GPUs are in our tier 1 of support: fully supported across MAX and Mojo and tested regularly in our CI systems.

But we’ve also been quietly working on something many of you have been asking for: better support for consumer-grade AMD RDNA GPUs.

Thanks to amazing community help, we’ve already made progress! You can now do general Mojo GPU programming on RDNA3 and RDNA4 GPUs, including the integrated Radeon 700M series and discrete Radeon RX 7000 and 9000 series cards.

However, we’re not quite there yet for full MAX model support on RDNA GPUs.

Here’s the gist: Many of our core Mojo kernels on AMD were originally built with CDNA GPUs in mind and aren’t yet compatible with RDNA GPUs, meaning that if you try to run a MAX model on an RDNA GPU, it will likely fail to compile. There are a few important architectural differences between CDNA and RDNA GPUs, including:

- Wavefront size: RDNA GPUs can use either 32- or 64-wide wavefronts (the equivalent of CUDA warps: think of these as groups of threads working together), while CDNA GPUs only use 64-wide wavefronts. RDNA defaults to 32-wide wavefronts, and specific flags need to be enabled for it to use 64-wide ones.

- Matrix Cores: CDNA GPUs have dedicated matrix cores, while RDNA GPUs do not. CDNA GPUs use MFMA intrinsics to access these matrix cores, and while RDNA GPUs have WMMA instructions for accelerating matrix multiplication, they do not map directly to the MFMA instructions.
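To make the wavefront-size difference concrete, here is a minimal, language-neutral sketch in Python (not Mojo, and not taken from the MAX kernels). The helper names `wavefronts_per_block` and `lane_and_wavefront` are hypothetical and exist only for this illustration. It shows how the same thread block splits into a different number of wavefronts, and how the same thread lands in a different lane, depending on whether the wavefront is 32 or 64 wide.

```python
def wavefronts_per_block(threads_per_block: int, wavefront_size: int) -> int:
    """Number of wavefronts needed to cover one thread block (rounded up)."""
    if wavefront_size not in (32, 64):
        raise ValueError("wavefront size must be 32 or 64")
    # Ceiling division without floating point.
    return -(-threads_per_block // wavefront_size)


def lane_and_wavefront(thread_index: int, wavefront_size: int) -> tuple[int, int]:
    """Return (lane within the wavefront, wavefront index) for a thread."""
    return thread_index % wavefront_size, thread_index // wavefront_size


if __name__ == "__main__":
    # Compare the RDNA default (32) with the CDNA width (64).
    for size in (32, 64):
        count = wavefronts_per_block(256, size)
        lane, wave = lane_and_wavefront(40, size)
        print(f"wavefront size {size}: {count} wavefronts per 256-thread block; "
              f"thread 40 is lane {lane} of wavefront {wave}")
```

A kernel that hard-codes 64 lanes per wavefront would compute the wrong lane and wavefront indices under RDNA's 32-wide default, which is one reason code written for CDNA may need RDNA-specific handling.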

What have we done so far to support RDNA?

Since the 25.4 release, we’ve added some key improvements:

- We’ve adjusted the default wavefront size (`WARP_SIZE` in the Mojo standard library) to 32 for RDNA GPUs.

- We’ve added special functions (`_is_amd_rdna()`, `_is_amd_rdna3()`, and `_is_amd_rdna4()`) to allow for specialization of kernel code for RDNA GPUs.

- We’ve also started adding WMMA intrinsics for RDNA GPU matrix multiplication.

We need your help to get to the finish line!

There’s still a lot of work to do to get MAX models running at peak performance on RDNA GPUs. This is where you, our awesome community members with RDNA 3 and newer GPUs, can make a huge difference!

Where to start: the flash attention kernel

Currently, one of the biggest roadblocks to running MAX models on RDNA is our flash attention kernel. It was designed for CDNA GPUs and makes assumptions about the shape of operations that don’t hold true for RDNA.

If you have an RDNA 3+ GPU and are interested in diving into kernel development, you can run the following command to build and test the Mojo standard library and kernels, and then run tests against our AMD flash attention kernel:

./bazelw test //max/kernels/test/gpu/nn:test_flash_attention_amd.mojo.test --test_output=all

This command currently fails on RDNA GPUs with a constraint error due to incompatible fragment sizes. (Note: due to a bug in our Bazel GPU lookups that we’re fixing, you may also need to change “780M” to “radeon” on this line in `common.MODULE.bazel` to run this test.)

Your mission: We need to add RDNA-specific code paths within our kernels that provide the correct shapes to RDNA’s WMMA intrinsics, and port anything else needed from CDNA to RDNA. The goal is for this test to pass cleanly on RDNA 3 and 4 GPUs, just like it does on CDNA today. Of course, all of our current CDNA support needs to be preserved along the way.

Everything you need to get started is in our open-source modular repository! You can dig in, experiment, and test your changes directly on your RDNA GPU. There are also other areas within our codebase that need RDNA-specific attention, so if you’re feeling adventurous, you can explore other failing GPU tests on RDNA.

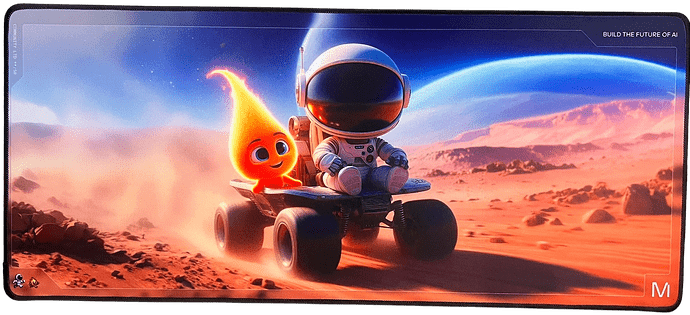

Your contributions would be incredibly valuable! For anyone who makes a contribution in this area that gets merged, we’re sending out an awesome Mojo and MAX branded gamer pad. You’ll also be directly contributing to a system that countless fellow AMD GPU users would benefit from every day!